SaaS Pricing Experiments: How to Test Price Changes Without Killing Revenue

Step-by-step guide to run safe SaaS pricing experiments: form hypotheses, test one variable on new users, monitor ARPU and churn, and scale winners.


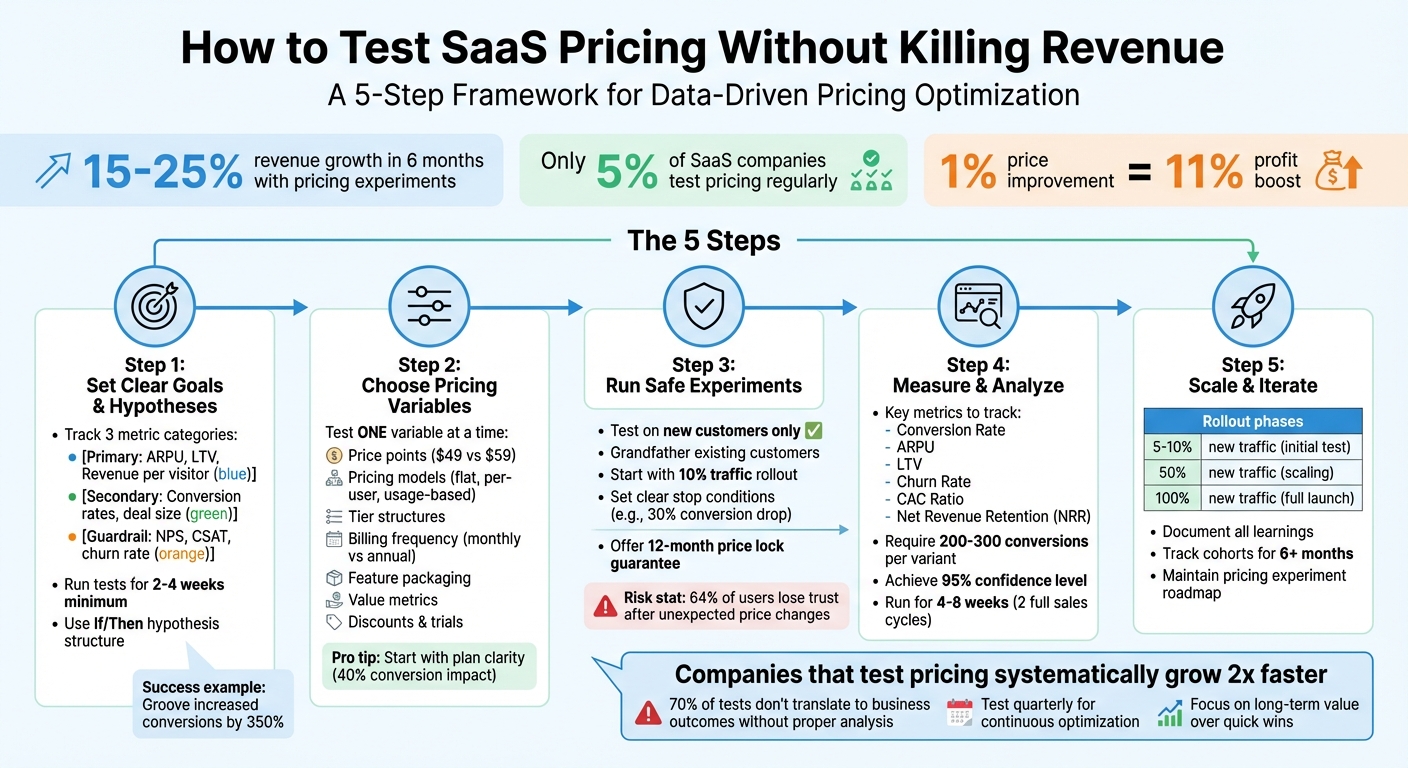

Pricing experiments can grow SaaS revenue by 15–25% in just six months. But only 5% of companies test their pricing regularly. This guide simplifies how to test price changes safely without losing customers or revenue.

Key takeaways include:

- Why pricing experiments matter: A 1% price improvement can boost profits by 11%.

- What to test: Price points, models, tiers, billing frequency, and more.

- How to test safely: Start small, test with new users only, and monitor metrics like ARPU and churn.

- Avoiding risks: Grandfather existing customers, define stop conditions, and run tests for at least two full sales cycles (typically 4–8 weeks).

This step-by-step approach ensures pricing changes are data-driven, minimizing risks and maximizing growth.


Running Pricing Experiments with Marc Boscher of Unito

::: @iframe https://www.youtube.com/embed/WJOewZ3vOc4 :::


Step 1: Set Clear Goals and Hypotheses

Before you start tweaking your pricing, it’s crucial to establish specific targets. Goals like "increase revenue" are too vague and don’t provide a clear way to measure success. Instead, focus on measurable objectives that can clearly indicate whether your pricing experiment worked or not.

How to Set Revenue-Focused Goals

To get a full picture of how pricing changes affect your business, track 3–5 key performance indicators (KPIs). These should fall into three distinct categories:

- Primary Metrics: These focus on your main financial outcomes. Examples include revenue per visitor, Average Revenue Per User (ARPU), or Customer Lifetime Value (LTV). For instance, you might aim to increase ARPU from $85 to $102 within 90 days.

- Secondary Metrics: These explain why your primary metric shifted. Keep an eye on metrics like conversion rates, average deal size, trial-to-paid conversion rates, or expansion revenue. For example, if ARPU rises but conversion rates drop significantly, it could suggest your price hike was too steep.

- Guardrail Metrics: These ensure your test doesn’t harm your brand or customer relationships. Metrics like Net Promoter Score (NPS), customer satisfaction (CSAT), churn rate, or support ticket volume act as safeguards. For example, a revenue boost paired with a 15-point drop in NPS might indicate a deeper issue.

To determine the right sample size for your test, you need four inputs: your base conversion rate, the smallest effect you want to detect, your confidence level, and your statistical power. For instance, if your base conversion rate is 5% and you want to detect a 1-point improvement (5% to 6%) at 95% confidence and the conventional 80% power, a standard two-proportion calculation calls for roughly 8,000 visitors per variant. Tests should run for at least 2–4 weeks to smooth out weekly patterns, and Step 4 recommends at least two full sales cycles.
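As a rough sketch of how such a sample size can be computed, the function below uses the standard normal-approximation formula for comparing two proportions. The 80% power default and the function name are assumptions, not something the article specifies. Requiring power on top of confidence pushes the number well above estimates that consider confidence alone.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(base_rate, target_rate, alpha=0.05, power=0.80):
    """Visitors needed per variant to detect a move from base_rate to target_rate.

    Two-sided test on two proportions (normal approximation).
    alpha=0.05 corresponds to 95% confidence; power=0.80 is an assumed default.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    pooled = (base_rate + target_rate) / 2
    spread_null = sqrt(2 * pooled * (1 - pooled))
    spread_alt = sqrt(base_rate * (1 - base_rate) + target_rate * (1 - target_rate))
    effect = abs(target_rate - base_rate)
    return ceil(((z_alpha * spread_null + z_power * spread_alt) / effect) ** 2)


# 5% base conversion, hoping to detect a lift to 6% (a 1-point absolute gain).
visitors_needed = sample_size_per_variant(0.05, 0.06)
print(f"Visitors needed per variant: {visitors_needed}")
```

Halving the effect you want to detect roughly quadruples the traffic you need, which is why small price tweaks are hard to validate on low-traffic pricing pages.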

Once your goals are set, the next step is to create specific, testable hypotheses.

How to Write Pricing Hypotheses

A solid hypothesis is built around your goals and states the change, the expected effect, and the acceptable guardrail cost in a single sentence. Use this template:

"Increasing [Plan Name] price from $[X] to $[Y] will increase [Metric] by [Z]% with less than [A]% drop in [Guardrail Metric]."

Here are some real-world examples of this approach in action:

- In 2013, Groove, led by CEO Alex Turnbull, found that their complex pricing model had a low conversion rate of 1.11%. They hypothesized: "Simplifying to one single price for new signups will increase conversions by at least 200%." The result? A 350% increase in conversions over their previous model [10].

- Planio, with growth marketer Thomas Carney, noticed that 75% of customers were in their lowest pricing tiers despite varying usage levels. They hypothesized: "Creating new pricing segments based on actual user behavior will increase average MRR per new customer by 50%." Testing this on new signups alone led to a 100% increase in average Monthly Recurring Revenue per new customer [10].

- In 2021, Atlassian tested multiple cloud pricing points with small customer segments. Their hypothesis aimed to maintain high customer satisfaction while boosting cloud adoption. The result? A 50% increase in cloud customers without compromising satisfaction [8].

Clearly define your success criteria - such as requiring a 95% confidence level - before starting your test. This avoids the temptation to justify ambiguous results later on. Keep in mind that even a small pricing improvement can have a big impact. For instance, a 1% improvement in pricing can drive an 11% increase in operating profit, outpacing similar gains in acquisition (7.8%) or retention (6.7%) [8].
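One way to make the hypothesis template and its success criteria hard to fudge later is to write them down as a small record before the test starts. The sketch below is illustrative only. The class name, field names, and the example numbers (a hypothetical "Pro" plan moving from $49 to $59) are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricingHypothesis:
    plan: str
    old_price: float
    new_price: float
    metric: str                    # primary metric, e.g. ARPU
    min_lift_pct: float            # smallest lift that counts as a win
    guardrail: str                 # guardrail metric, e.g. trial-to-paid conversion
    max_guardrail_drop_pct: float  # largest acceptable drop in the guardrail

    def statement(self) -> str:
        """Render the hypothesis in the template's wording."""
        return (
            f"Increasing {self.plan} price from ${self.old_price:g} to "
            f"${self.new_price:g} will increase {self.metric} by "
            f"{self.min_lift_pct:g}% with less than "
            f"{self.max_guardrail_drop_pct:g}% drop in {self.guardrail}."
        )

    def is_supported(self, observed_lift_pct: float, observed_guardrail_drop_pct: float) -> bool:
        """True only if both the lift target and the guardrail limit are met."""
        return (
            observed_lift_pct >= self.min_lift_pct
            and observed_guardrail_drop_pct < self.max_guardrail_drop_pct
        )


hypothesis = PricingHypothesis("Pro", 49, 59, "ARPU", 15, "trial-to-paid conversion", 10)
print(hypothesis.statement())
```

Because the thresholds are fixed up front, a result like "ARPU up 18%, conversion down 12%" fails the check rather than being argued into a win after the fact.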

"Pricing is too important to guess at. Yet traditional approaches to pricing optimization are slow, risky, and based more on opinion than evidence."

– Tara Minh, Operation Enthusiast, Rework [9]

Lastly, remember to account for segment-specific behavior when crafting hypotheses. Price sensitivity can vary widely between small businesses and enterprise clients, so it’s wise to create separate hypotheses for each customer segment [9].

Step 2: Choose Which Pricing Variables to Test

Now that you've developed clear, revenue-focused hypotheses in Step 1, it's time to zero in on the specific pricing variable you want to test. The choice should align with the revenue and conversion goals you've already outlined. The golden rule? Test one variable at a time. If you test too many variables simultaneously, you risk not knowing which change is driving the results.

Pricing Variables You Can Test

Here are seven pricing elements you can experiment with:

- Price points: Adjust specific dollar amounts (e.g., $49 vs. $59) to find the sweet spot between conversion rates and Average Revenue Per User (ARPU).

- Pricing models: Experiment with flat-rate, per-user, usage-based, or hybrid models. Hybrid models, for instance, have been shown to drive up to 17% higher growth rates [5].

- Tier structures: Modify existing tiers or introduce new ones (like "Pro" or "Enterprise") to better cater to customer segments. For example, Zapier boosted its average contract value by 35% by introducing higher-tier plans with expanded features and adjusting limits on lower tiers [4].

- Billing frequency: Compare monthly versus annual billing options. Highlighting annual pricing often increases Average Contract Value (ACV).

- Feature packaging: Test bundling features together versus offering them à la carte.

- Value metrics: Identify what your customers value most - seats, data storage, API calls, or transactions processed. For instance, Intercom shifted from "per user" pricing to "per contact" pricing, leading to better conversion rates and retention [7].

- Discounts and trials: Experiment with trial lengths, freemium conversion points, or promotional discounts. Slack, for instance, tested a "Fair Billing Policy" that only charged for active users, helping them secure 87% of Fortune 100 companies while maintaining a 93% renewal rate [5].

"Pricing is the most important lever that SaaS companies have, but almost none of them systematically optimize it." – Patrick Campbell, CEO of ProfitWell [4]

Focus on impact over convenience. Start with plan architecture and clarity - this has the most influence on conversion rates. In fact, unclear pricing plans can slash conversions by as much as 40% [2]. Once that's tackled, shift your attention to strategies like value anchoring, positioning, discounts, and finally, price point adjustments.

After selecting the pricing element to test, plan the timing carefully to maximize results.

When to Test New Pricing Models or Add Tiers

Timing is everything when it comes to testing new pricing models or introducing additional tiers. The ideal moment? Right after a major product enhancement. Wait about 4–6 weeks post-launch to give customers time to experience the added value before encountering new pricing [11]. For instance, Asana rolled out significant workflow automation features and waited 60 days before testing new pricing models with fresh customer cohorts [11].

For B2B SaaS, budget cycles also play a key role. Since 74% of B2B software purchases are made in Q4 or Q1 [11], these periods are perfect for testing as companies evaluate or refresh their budgets. Industry-wide changes can also signal good opportunities for testing.

If the majority (75% or more) of your customers are stuck in the lowest pricing tier despite varying usage levels, it might be time to experiment with a mid-tier or "decoy" plan to drive upgrades.

Stick to testing new pricing models or tiers with new customer cohorts only. This approach avoids disrupting current customers and has been used in 75% of successful SaaS pricing tests [4]. By timing your tests strategically and focusing on one variable at a time, you'll gain clearer insights into what works and what doesn’t.

Step 3: Run Pricing Experiments Without Disrupting Revenue

Testing pricing can feel risky, but a structured approach helps protect both your revenue and customer relationships. By using a clear framework and carefully managing the rollout, you can test new price points without jeopardizing your bottom line or losing customer trust.

Pricing Experiment Methods

One of the simplest and most effective ways to test pricing is through A/B testing. Here’s how it works: you divide new users into two groups - one sees your current pricing (the control), and the other sees a new pricing structure (the variant). This method works especially well for testing single variables, like moving a price from $49 to $59. It’s a great fit for SaaS businesses with moderate traffic, as it provides straightforward, actionable insights.
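Before running a test like $49 versus $59, it helps to know how much conversion you can afford to lose. The sketch below works out the break-even point on revenue per visitor. It looks at first-period revenue only and ignores churn and expansion, and the 5% baseline conversion rate is a made-up example.

```python
def revenue_per_visitor(price: float, conversion_rate: float) -> float:
    """Expected first-period revenue from one pricing-page visitor."""
    return price * conversion_rate


def break_even_conversion_drop(old_price: float, new_price: float) -> float:
    """Largest relative drop in conversion that keeps revenue per visitor flat."""
    return 1 - old_price / new_price


max_drop = break_even_conversion_drop(49, 59)
print(f"Break-even conversion drop: {max_drop:.1%}")

control = revenue_per_visitor(49, 0.050)      # hypothetical 5% baseline conversion
variant_ok = revenue_per_visitor(59, 0.044)   # 12% relative drop in conversion
variant_bad = revenue_per_visitor(59, 0.040)  # 20% relative drop in conversion
print(control, variant_ok, variant_bad)
```

In this example the $59 price wins as long as conversion falls by less than about 17%. A 12% drop still raises revenue per visitor, while a 20% drop does not.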

How to Roll Out Pricing Tests Safely

Once you’ve chosen your testing method, these steps can help you minimize risks and maintain customer trust:

- Test on New Customers Only: Keep your experiments limited to users who sign up after a specific date. This avoids surprising your existing customers, which could damage trust or increase churn. Surveys show that 64% of users lose trust in a brand after unexpected price changes [5].

- Grandfather Existing Customers: If you decide to roll out new pricing, consider grandfathering your current users. This means keeping their original rates either indefinitely ("hard grandfathering") or for a set period ("soft grandfathering"). If changes to existing customers are unavoidable, give at least 60 days’ notice to soften the impact [4].

- Use Staged Rollouts: Instead of directing half your traffic to a new price right away, start small. For example, send only 10% of new signups to the test price during the first 48 hours. This allows you to monitor for any issues - like billing errors or unexpected drops in conversions - before scaling up.

- Ensure Consistent Pricing Within Accounts: Avoid pricing inconsistencies within the same organization. For instance, if one team member sees a different price than another, it can cause confusion and erode trust. In fact, 81% of customers say they would leave a company they perceive as deceptive about costs [2].

- Set Clear Stop Conditions: Before launching your test, define the conditions that would make you halt it immediately. Examples include a conversion drop exceeding 30% or a surge in negative feedback on social media. Have a rollback plan ready to quickly reverse any changes if needed [5].

- Isolate Experiment Logic from Core Billing: Keep your experiment code separate from your main billing system. This prevents billing errors and keeps your core system clean, even if you’re running multiple test variants.

- Offer a Price Lock Guarantee: Reassure new customers by guaranteeing their test price for at least 12 months, even if prices increase later. This builds confidence during the sign-up process and shows your commitment to transparent pricing - something that 73% of customers value [2].
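Several of the safeguards above (new customers only, grandfathering, staged rollout, and one price per account) can live in a small assignment layer that sits apart from billing. The following is a minimal sketch, not a production design. `PriceExperiment`, `bucket`, and `price_for` are hypothetical names, and the dates and prices are made up.

```python
import hashlib
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class PriceExperiment:
    name: str
    start_date: date      # only accounts created on or after this date are eligible
    rollout_pct: int      # share of eligible accounts shown the test price (0-100)
    control_price: float
    variant_price: float


def bucket(experiment_name: str, account_id: str) -> int:
    """Map an account to a stable bucket in 0..99 by hashing its ID."""
    digest = hashlib.sha256(f"{experiment_name}:{account_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def price_for(exp: PriceExperiment, account_id: str, signup_date: date,
              grandfathered_price: Optional[float] = None) -> float:
    """Return the price an account should see under this experiment."""
    if signup_date < exp.start_date:
        # Existing customers never enter the test and keep their old rate if they have one.
        return grandfathered_price if grandfathered_price is not None else exp.control_price
    if bucket(exp.name, account_id) < exp.rollout_pct:
        return exp.variant_price
    return exp.control_price


experiment = PriceExperiment("pro-59-test", date(2024, 1, 1), 10, 49.0, 59.0)
print(price_for(experiment, "acct-1042", date(2024, 2, 3)))
```

Hashing the account ID rather than the user ID means every teammate in an organization lands in the same bucket. Raising `rollout_pct` from 10 to 50 only adds accounts to the variant, so nobody who already saw the test price gets switched back.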

Step 4: Measure Results and Analyze Impact

Once your pricing tests are live, the next step is to evaluate their effectiveness through precise measurement. This is where the real challenge begins: identifying the right metrics and interpreting what they reveal. Without thorough analysis, pricing changes can lead to unintended revenue pitfalls.

Which Metrics to Track

Focus on metrics that directly reflect the impact of your pricing experiment on your business. While statistical significance is important, prioritize economic significance - does the revenue impact justify the effort? It's worth noting that 70% of statistically significant test results fail to translate into meaningful business outcomes [15].

Here are the key metrics to monitor:

- Conversion Rate: Tracks how pricing influences a prospect's decision to buy, particularly during critical stages like trial-to-paid conversions.

- Average Revenue Per User (ARPU): Helps assess whether increased prices balance out any potential drop in sales volume.

- Lifetime Value (LTV): Shows the total value a customer contributes over their relationship with your business, ensuring short-term gains don't harm long-term profitability.

- Churn Rate: Measures customer and revenue loss, signaling if price changes are driving cancellations at an unsustainable rate.

- CAC Ratio: Evaluates the cost of acquiring customers compared to the revenue they generate, highlighting whether pricing adjustments improve acquisition efficiency.

- Net Revenue Retention (NRR): Tracks growth from existing customers through upgrades and expansions, offering insights into how pricing tiers encourage scaling.

| Metric | Why It Matters in Pricing Experiments |
|---|---|
| Conversion Rate | Reveals how price impacts a prospect's willingness to buy, especially at key stages like trial-to-paid conversions [14][3]. |
| ARPU | Indicates if higher prices offset potential volume losses by measuring average revenue per user [13][14]. |
| LTV (Lifetime Value) | Predicts the long-term value customers bring, ensuring short-term pricing gains don't harm overall profitability [13][14]. |
| Churn Rate | Tracks customer and revenue loss, acting as a red flag if price increases lead to excessive cancellations [14][12]. |
| CAC Ratio | Measures customer acquisition cost relative to revenue, showing if pricing improves payback efficiency [13]. |
| Net Revenue Retention (NRR) | Reflects growth from upgrades and expansions, highlighting how pricing tiers encourage scaling [13]. |

Together, these metrics provide a comprehensive view of both immediate responses and long-term revenue trends.
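To make a few of these metrics concrete, here is a small sketch using common simplified definitions. LTV as ARPU divided by churn is a rough approximation that assumes constant churn and ignores margin, and all cohort numbers below are invented for illustration.

```python
def arpu(total_revenue: float, active_customers: int) -> float:
    """Average revenue per user for the period."""
    return total_revenue / active_customers


def churn_rate(customers_lost: int, customers_at_start: int) -> float:
    """Share of customers who cancelled during the period."""
    return customers_lost / customers_at_start


def simple_ltv(monthly_arpu: float, monthly_churn: float) -> float:
    """Rough lifetime value: ARPU divided by churn (assumes constant churn)."""
    return monthly_arpu / monthly_churn


def net_revenue_retention(starting_mrr: float, expansion: float,
                          contraction: float, churned: float) -> float:
    """MRR kept from an existing cohort, including upgrades and downgrades."""
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


# Hypothetical monthly cohorts: the variant earns more per user but churns faster.
control_arpu = arpu(17_000, 200)   # $85
variant_arpu = arpu(18_360, 180)   # $102
control_ltv = simple_ltv(control_arpu, churn_rate(6, 200))   # 3% monthly churn
variant_ltv = simple_ltv(variant_arpu, churn_rate(9, 180))   # 5% monthly churn
print(round(control_ltv), round(variant_ltv))
```

In this made-up case the variant wins on ARPU yet loses on LTV, which is the kind of trade-off that a single-metric readout would hide.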

"The problem isn't that SaaS companies don't measure their pricing tests - it's that they measure too narrowly." – Patrick Campbell, Founder, ProfitWell [13]

How to Analyze Test Results for Statistical Significance

Once you've gathered your data, the next step is to analyze it rigorously. Pricing tests should run for at least two full sales cycles (typically 4–8 weeks) to capture customer behavior and account for weekly fluctuations. Each test variant should achieve at least 200–300 conversions to ensure reliable results [3][12][15]. Inadequate sample sizes are a common issue - 57% of A/B tests fail to produce conclusive results for this reason [5].

Use a statistical calculator to verify that your sample size meets the minimum threshold. Aim for a 95% confidence level, meaning that if the pricing change had no real effect, a difference as large as the one you observed would show up by chance less than 5% of the time [16]. Resist the temptation to check results prematurely; allow the experiment to run its full duration to avoid false positives.

Finally, don't just focus on the customers who converted or stayed. Pay close attention to those who dropped out of the funnel. Understanding their behavior can reveal price sensitivity and help you avoid survivorship bias in your analysis [13].
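If you would rather see what such a calculator does under the hood, the sketch below runs a two-sided two-proportion z-test on conversion counts. It covers conversion rate only (revenue per visitor needs a different test), and the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, p_value) for the difference in conversion rate, B minus A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control converts 300 of 6,000, variant 240 of 6,000.
z, p_value = two_proportion_z_test(300, 6000, 240, 6000)
significant = p_value < 0.05   # matches the 95% confidence threshold
print(f"z = {z:.2f}, p = {p_value:.4f}, significant = {significant}")
```

A significant drop in conversion is not automatically a loss. Pair the result with revenue per visitor and your guardrail metrics before deciding.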

Step 5: Scale and Iterate on Successful Pricing Experiments

Once you've identified a winning pricing experiment, the real challenge is scaling it without jeopardizing your current revenue streams. A successful test doesn’t automatically translate to success on a larger scale - you’ll need a careful plan to expand while ensuring your existing customers and revenue remain stable.

How to Implement Winning Pricing Changes

Begin with a small-scale rollout, targeting 5–10% of new traffic for the winning variant [3]. This limited approach allows you to identify and fix technical issues or unexpected spikes in support tickets before they escalate. Keep a close eye on daily indicators, like trial-to-paid conversion rates and demo requests, as these provide early warnings of potential revenue disruptions - well before metrics like Monthly Recurring Revenue (MRR) or churn are affected [3].

Stick to your pre-defined stop conditions for a quick rollback if necessary. For example, if conversion rates drop by more than 30% or support tickets double, revert immediately [3]. Tools like feature flags or dedicated monetization infrastructure make it easier to separate pricing logic from your core code, enabling fast and smooth reversions when needed [6].
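Stop conditions are easiest to honor when they are written as a check that runs on every daily report. Here is a minimal sketch using the two example thresholds above (a conversion drop of more than 30%, or support tickets doubling). The names and input numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopConditions:
    max_conversion_drop: float = 0.30   # relative drop versus control
    max_ticket_multiple: float = 2.0    # current tickets versus baseline


def rollback_reasons(control_conv: float, variant_conv: float,
                     baseline_tickets: int, current_tickets: int,
                     limits: StopConditions = StopConditions()) -> list:
    """Return the list of tripped stop conditions (empty means keep running)."""
    reasons = []
    if control_conv > 0:
        drop = (control_conv - variant_conv) / control_conv
        if drop > limits.max_conversion_drop:
            reasons.append(f"conversion down {drop:.0%}")
    if baseline_tickets > 0:
        multiple = current_tickets / baseline_tickets
        if multiple >= limits.max_ticket_multiple:
            reasons.append(f"support tickets at {multiple:.1f}x baseline")
    return reasons


reasons = rollback_reasons(control_conv=0.05, variant_conv=0.03,
                           baseline_tickets=100, current_tickets=120)
if reasons:
    print("Roll back:", "; ".join(reasons))
```

Returning the reasons rather than a bare boolean gives the team something concrete to put in the rollback announcement.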

When scaling, grandfather existing customers to protect your current revenue while applying the new pricing only to new users [3]. If you need to communicate price changes, focus on the added value - like new features, better outcomes, or improved customer support - rather than justifying the cost increase. This value-driven messaging can improve customer acceptance rates by up to 35% [7].

Before launching, ensure all internal teams - sales, support, and customer success - are aligned. Provide clear messaging and documentation so they can confidently address customer questions about the new pricing structure [3]. To measure the long-term effects, use cohort tracking to monitor metrics like Lifetime Value (LTV) and retention over at least six months [3].

| Rollout Phase | Target Audience | Goal | Risk Mitigation |
|---|---|---|---|
| Initial Test | 5–10% New Traffic | Validate Hypothesis | Limit revenue exposure with small sample size |
| Scaling | 50% New Traffic | Confirm Statistical Significance | Monitor support ticket trends |
| Full Launch | 100% New Traffic | Maximize New Revenue | Grandfather existing customers |
| Expansion | Existing Customers | Increase ARPU | Use value-based messaging and early lock-in offers |

Once the new pricing is successfully scaled, it’s crucial to document your findings for future reference and ongoing improvement.

Document What You Learn for Future Tests

After every pricing experiment, take the time to record your insights. This documentation will serve as a valuable resource for planning future tests. Capture key details like the hypothesis, variables tested, statistical significance, and customer feedback in a centralized "Experiment Brief" [3]. This approach not only builds institutional knowledge but also helps avoid repeating unsuccessful strategies or overlooking effective ones.

Maintain a "Pricing Experiment Roadmap" to track every test, including the variables, outcomes, and numerical results [5]. Don’t just focus on the wins - document what didn’t work and why. Companies that formalize their pricing strategies and conduct regular experiments (at least annually) report 10–15% higher growth rates [17].
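An experiment brief does not need special tooling. A structured record that serializes to JSON is enough to start a searchable archive. The fields below are one possible layout, and every value in the example is a placeholder rather than a real result.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ExperimentBrief:
    name: str
    hypothesis: str
    variable_tested: str
    start_date: str               # ISO dates kept as strings for easy JSON export
    end_date: str
    p_value: float
    outcome: str                  # e.g. "win", "loss", "inconclusive"
    customer_feedback: list = field(default_factory=list)
    lessons: str = ""


brief = ExperimentBrief(
    name="pro-59-test",
    hypothesis="Raising Pro from $49 to $59 lifts ARPU by 15% with under 10% conversion loss.",
    variable_tested="price point",
    start_date="2024-01-08",
    end_date="2024-03-04",
    p_value=0.21,
    outcome="inconclusive",
    customer_feedback=["Two prospects asked why annual billing was not discounted."],
    lessons="Traffic was too low for the effect size; rerun with a larger price gap.",
)

# One JSON document per experiment is easy to store alongside the roadmap.
record = json.dumps(asdict(brief), indent=2)
print(record)
```

Recording inconclusive and losing tests in the same format as wins keeps the roadmap honest and stops the team from rerunning the same dead ends.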

Conclusion

Pricing experiments don’t have to jeopardize your revenue. By sticking to a structured process - defining clear hypotheses, testing with segmented audiences, rolling out changes incrementally, and tracking the right metrics - you can fine-tune your SaaS pricing strategy while safeguarding your financial health. Think of pricing as a dynamic element of your business that requires ongoing adjustments, not a set-it-and-forget-it decision.

Research shows that companies conducting disciplined pricing experiments often achieve 15–25% revenue growth within six months [3]. Additionally, even a 1% improvement in price optimization can lead to an 11% increase in profits [4]. Yet, only 5% of SaaS companies consistently test their pricing, leaving significant revenue potential unrealized [4]. As Patrick Campbell, CEO of ProfitWell, aptly states:

"Pricing is the most important lever that SaaS companies have, but almost none of them systematically optimize it" [4].

The most impactful pricing experiments focus on long-term value over quick wins. Metrics like Customer Lifetime Value (LTV) and Net Revenue Retention (NRR) should take precedence over short-term conversion rates. Lower price points may boost conversions initially but often lead to higher churn and less expansion revenue [18]. Use grandfathering to protect existing customers, run tests over at least two full sales cycles to capture meaningful patterns, and ensure a 95% confidence level before implementing permanent changes [18][3].

Key Takeaways

Start by improving packaging and plan clarity before tweaking price points - unclear plans can reduce conversions by 40% [3][2]. Begin testing with new signups or specific customer segments to limit risk, and roll out changes gradually, starting with just 5–10% of traffic to identify potential technical issues early [3]. Track both leading metrics, like trial-to-paid conversion, and lagging ones, such as 6-month LTV, to confirm that your changes drive sustainable growth rather than short-term spikes [3][1].

Keep a centralized roadmap for your experiments to ensure continuous improvement. By maintaining a quarterly testing schedule and committing to data-driven decisions, you can join the ranks of SaaS companies that grow twice as fast through systematic pricing strategies [4]. These practices highlight that thoughtful, data-backed pricing is a cornerstone of sustainable SaaS growth.

FAQs

::: faq

What’s the safest first pricing test to run?

The safest way to dip your toes into pricing tests is with a small, controlled A/B test. Focus on just one variable - like tweaking prices slightly or adjusting a feature bundle. Start with a clear hypothesis, such as testing whether a 10% price increase on your premium tier affects conversion rates. Then, track both the numbers and customer feedback to see what changes. By applying solid statistical methods and segmenting your audience carefully, you can reduce risks and gather insights that will guide your future pricing decisions. :::

::: faq

How do I pick the right test segments?

To pick the right test segments, begin by pinpointing specific customer groups. These can be categorized by usage habits, purchase history, or engagement levels. By running pricing tests within these smaller, well-defined groups, you can gather more accurate data while minimizing potential risks.

Pay special attention to customer categories, such as enterprise clients versus small-to-medium businesses (SMBs). Keep an eye on essential metrics like trial conversion rates and customer lifetime value (CLV). These insights will help you fine-tune your strategy and steer clear of any negative customer reactions. :::

::: faq

How do I avoid pricing confusion for teams and accounts?

To avoid confusion around pricing, focus on clear segmentation, effective guardrails, and open communication. Assign experiment variants at the account level, so everyone in the same organization sees the same price. Segmentation helps tailor pricing to different customer groups, ensuring it feels fair and appropriate. At the same time, being transparent about pricing experiments helps build trust with your audience.

When designing these experiments, treat them as configurations instead of hard-coded changes. This approach, combined with tools that allow for plan overrides and leverage customer metadata, can significantly reduce errors. Finally, track metrics like conversion rates and customer trust to ensure your pricing adjustments are clear and cause minimal disruption. :::
