How to Run a Pricing Experiment Without Losing Customers

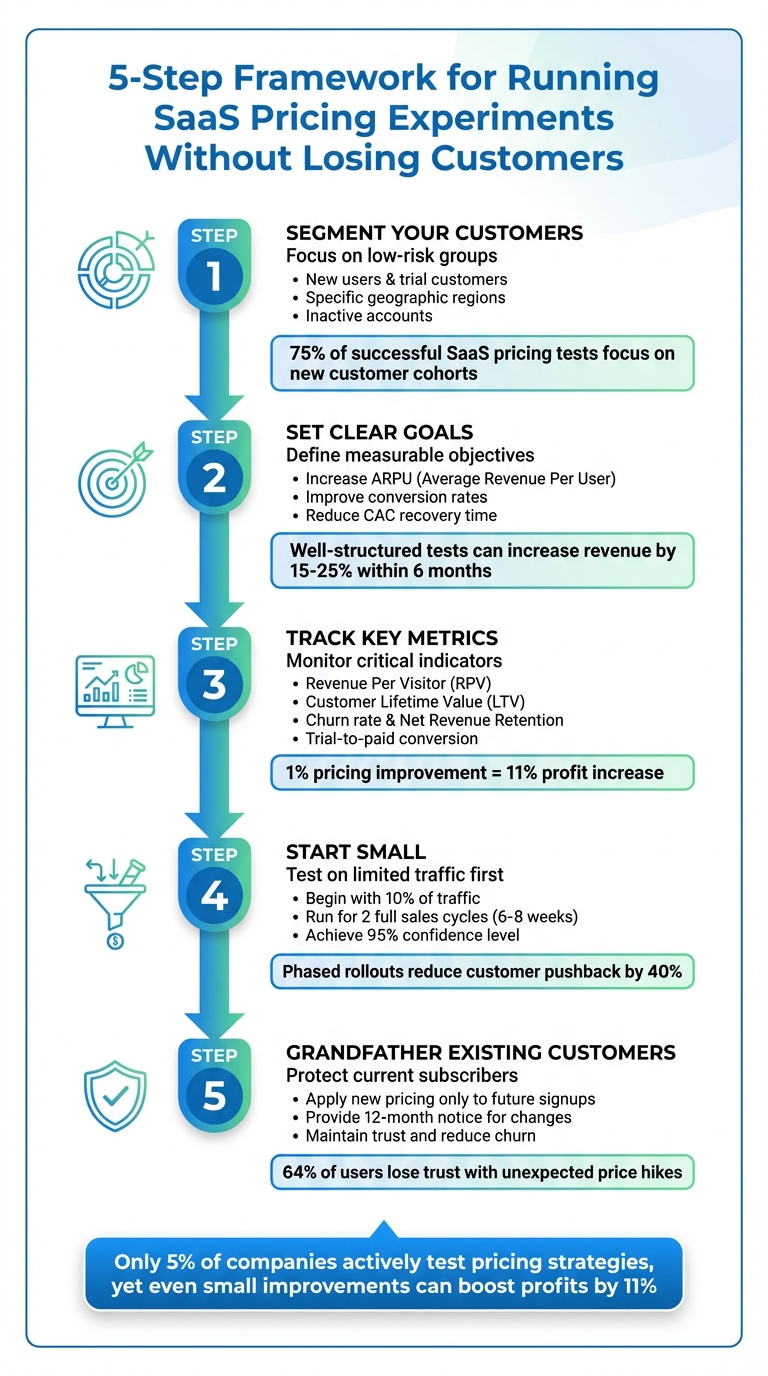

Test SaaS pricing on low-risk segments, grandfather existing users, and use clear metrics to raise revenue without losing customers.

Pricing experiments can boost SaaS revenue without alienating customers - if done carefully. Here's the key: test pricing changes on low-risk groups (like new users) and use clear communication to maintain trust. Even a 1% improvement in pricing can increase profits by 11%, yet only 5% of companies actively test their pricing strategies.

To get started:

- Segment your customers: Focus on new users, specific regions, or inactive accounts to reduce risk.

- Set clear goals: Define objectives like increasing Average Revenue Per User (ARPU) or improving conversion rates.

- Track metrics: Monitor revenue per visitor, churn rate, and customer lifetime value (LTV).

- Start small: Test changes on a limited group (e.g., 10% of traffic) before scaling up.

- Grandfather existing customers: Protect current subscribers from pricing changes to avoid churn.

5-Step Framework for Running SaaS Pricing Experiments Without Losing Customers

How to Segment Customers to Reduce Risk

Treating every customer the same is a recipe for pricing disaster. Segmentation acts as your safety net, allowing you to test pricing changes on less critical groups while safeguarding your main revenue streams. Done correctly, it transforms a potential company-wide failure into a manageable, low-stakes experiment.

The numbers speak for themselves. 75% of successful SaaS pricing tests focus solely on new customer cohorts. Additionally, businesses that take a more refined approach to pricing segmentation report 14-26% higher annual recurring revenue (ARR) compared to those sticking with basic tiered models. Why? Because testing on the wrong group can erode trust, while targeting the right segment yields clean, actionable data without major repercussions.

Finding Low-Risk Customer Segments

New customers and trial users are your safest options. These groups come with no previous pricing expectations, so they’re less likely to feel caught off guard by changes. For example, in May 2025, Zapier tested premium pricing for advanced multi-step Zaps by targeting just 5% of new signups. While conversions dropped by 4%, the average revenue per user (ARPU) rose enough to increase revenue from that group by 17%. This strategy protected existing users while delivering useful insights.
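To see how a conversion drop and a revenue gain can coexist, it helps to work through the arithmetic. The sketch below assumes cohort revenue is simply conversions multiplied by ARPU, and that both percentages are relative changes (the example above does not say); `required_arpu_lift` is a hypothetical helper name.

```python
def required_arpu_lift(conversion_change: float, revenue_change: float) -> float:
    """Return the relative ARPU change implied by relative changes in
    conversions and in total cohort revenue.

    Revenue = conversions * ARPU, so
    (1 + revenue_change) = (1 + conversion_change) * (1 + arpu_change).
    """
    return (1 + revenue_change) / (1 + conversion_change) - 1


# Conversions down 4%, cohort revenue up 17% (both assumed to be relative changes).
lift = required_arpu_lift(conversion_change=-0.04, revenue_change=0.17)
print(f"Implied ARPU lift: {lift:.1%}")
```

Under these assumptions, ARPU would need to rise by roughly 22% to turn a 4% conversion drop into a 17% revenue gain.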

Geographic and channel segmentation is another effective way to reduce risk. By limiting tests to specific regions or acquisition channels, you can avoid price comparisons across customer groups. HubSpot’s Service Hub pricing revision is a great case in point. The company tested the changes in three mid-market sales territories with similar customer profiles. Over a 60-day period, they achieved statistically significant results while minimizing risk.

Grandfathering existing subscribers is a tried-and-true method to preserve core revenue and maintain trust. Keeping current customers on their original pricing is often seen as the "gold standard" for avoiding churn during pricing changes. Phased rollouts, which introduce pricing updates gradually, have also been shown to reduce customer pushback by 40% compared to immediate, company-wide changes.

Here’s a quick breakdown of segmentation types, their descriptions, and the associated risk levels:

| Segmentation Type | Description | Risk Level |
|---|---|---|
| New Customer Cohorts | Testing only on users signing up after a set date. | Low |
| Geographic Isolation | Testing in specific regions or countries. | Low |
| Feature-Based | Testing pricing on users of specific new tools. | Medium |
| Existing Customers | Testing price increases on current subscribers without grandfathering. | High |

Using Customer Data to Build Test Cohorts

Once you’ve identified low-risk segments, the next step is to fine-tune these groups using detailed customer data. Start by grouping customers based on signup dates and pricing tiers to uncover patterns and compare performance before and after the test.
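Grouping by signup date and tier can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the `Customer` fields, the cutoff date, and the function names are all assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical cutoff: only accounts created on or after this date enter the test.
EXPERIMENT_START = date(2025, 6, 1)


@dataclass
class Customer:
    customer_id: str
    signup_date: date
    plan: str


def split_cohorts(customers, start=EXPERIMENT_START):
    """Separate grandfathered customers from those eligible for the test."""
    grandfathered, eligible = [], []
    for c in customers:
        (eligible if c.signup_date >= start else grandfathered).append(c)
    return grandfathered, eligible


def group_by_plan(customers):
    """Map each pricing tier to the IDs of the customers on it."""
    groups = defaultdict(list)
    for c in customers:
        groups[c.plan].append(c.customer_id)
    return dict(groups)


customers = [
    Customer("c1", date(2024, 11, 3), "pro"),
    Customer("c2", date(2025, 6, 2), "pro"),
    Customer("c3", date(2025, 7, 15), "starter"),
]
grandfathered, eligible = split_cohorts(customers)
print([c.customer_id for c in grandfathered])  # ['c1']
print(group_by_plan(eligible))                 # {'pro': ['c2'], 'starter': ['c3']}
```

Keeping the cutoff date in one place makes it easy to compare the same tiers before and after the test starts.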

Dive deeper into behavioral and usage data to understand how customers perceive value. Metrics like feature adoption, usage frequency, and volume (e.g., API calls, storage, or seats) can highlight areas to test without disrupting the overall experience. For B2B companies, firmographic data - such as company size and industry - can help measure how pricing impacts different business categories.

In 2025, Atlassian tested a new pricing model for Jira by targeting mid-sized companies (50-200 employees) within their self-serve channel. This allowed them to fine-tune their grandfathering approach and messaging before rolling out the changes on a larger scale. Similarly, acquisition data helps identify price-sensitive groups for targeted experiments.

Risk mitigation data adds another layer of precision. By focusing on "low-risk" segments like new users, specific regions, or inactive accounts, you can avoid alienating your core, high-value customers. Companies that employ behavioral segmentation in their pricing strategies have seen a 13% reduction in churn. This shows that using data to guide your decisions doesn’t just reduce risk - it also delivers measurable results.

Setting Goals and Metrics for Your Experiment

Once you've pinpointed low-risk segments, the next step is to set clear goals and metrics. Without a clear direction, pricing experiments can easily turn into guesswork. Knowing exactly what you aim to achieve and how to measure success is crucial. The difference between a well-executed test and an expensive misstep often lies in defining the right objectives and tracking meaningful metrics.

Defining Business Objectives

Start by crafting a specific, testable pricing hypothesis. Use this structure: "If we [pricing change], then [customer segment] will [behavior change] because [underlying belief]." For example: "If we increase our Pro tier price from $49 to $59 per month, then new SMB customers will convert at similar rates because the added features justify the increase." This hypothesis should align directly with your broader company goals, such as achieving a 25% revenue increase.

"Write down the bigger goal and work backward. Map how your pricing experiment is going to get you closer to that goal and define the KPIs to measure its success."

– Sumyukthaa Sankar, Product Marketer, Chargebee

Common objectives in pricing experiments include boosting Average Revenue Per User (ARPU), improving trial-to-paid conversion rates, or reducing the time required to recover Customer Acquisition Cost (CAC). Well-structured pricing tests with measurable goals can increase revenue by 15–25% within six months.

Before launching your experiment, set a minimum detectable effect - a threshold for the smallest change that matters to your business (typically 10–15% improvement). Also, establish stop conditions, such as halting the experiment if conversion rates drop by 30%, to protect your revenue.
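A stop condition is easiest to enforce when it is written down as a check rather than left to judgment. Here is a minimal sketch, assuming a 30% relative drop in conversion rate as the trigger; the `min_visitors` floor is an added assumption to avoid reacting to noise from tiny samples, and `should_stop` is a hypothetical name.

```python
def should_stop(control_conversions: int, control_visitors: int,
                variant_conversions: int, variant_visitors: int,
                max_relative_drop: float = 0.30,
                min_visitors: int = 200) -> bool:
    """Return True when the variant converts at least `max_relative_drop`
    worse than control, once both groups have enough traffic to compare."""
    if min(control_visitors, variant_visitors) < min_visitors:
        return False  # too little data to judge (assumed threshold)
    control_rate = control_conversions / control_visitors
    variant_rate = variant_conversions / variant_visitors
    if control_rate == 0:
        return False  # no baseline to compare against yet
    relative_change = (variant_rate - control_rate) / control_rate
    return relative_change <= -max_relative_drop


print(should_stop(50, 1000, 30, 1000))  # 5% vs 3%: a 40% relative drop
print(should_stop(50, 1000, 45, 1000))  # 5% vs 4.5%: a 10% relative drop
```

The first call reports that the experiment should halt; the second does not.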

Once your objectives are clear, the next step is identifying the right metrics to track success.

Choosing the Right Metrics to Track

Metrics are your compass - they validate your hypothesis and show how pricing changes affect your business. Two key metrics to focus on are Revenue Per Visitor (RPV) and Customer Lifetime Value (LTV). RPV highlights immediate monetization efficiency, while LTV forecasts long-term profitability. For SaaS companies, LTV should ideally be at least three times the CAC, with acquisition costs recovered within 12 months.

Pay attention to leading indicators like trial-to-paid conversion rates and demo requests. These early signals can help you spot potential issues before revenue data matures. For example, freemium models typically see conversion rates of 15–25%, while time-limited trials range from 25–40%.

Don't ignore lagging indicators like churn rate and Net Revenue Retention (NRR). These metrics reveal the long-term effects of pricing changes on customer satisfaction and growth. A small price increase might boost short-term revenue but could lead to higher churn months later if not carefully managed.

To safeguard against unintended consequences, track guardrail metrics like support ticket volume and customer sentiment scores (NPS/CSAT). These metrics ensure pricing changes don't damage customer trust or overwhelm your operations. Even minor improvements in pricing strategy can lead to an 11% profit increase - but only if customers remain engaged.

Here’s a quick summary of key metrics and why they matter:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Revenue Per Visitor (RPV) | Total revenue ÷ Unique visitors | Shows how efficiently you monetize traffic |
| Customer Lifetime Value (LTV) | (ARPU × Gross Margin %) ÷ Monthly churn | Predicts long-term profitability |
| Net Revenue Retention (NRR) | ((Starting Revenue + Expansion – Churn) ÷ Starting Revenue) × 100 | Tracks growth from existing customers |
| Trial-to-Paid Conversion | (Paid Customers ÷ Trial Signups) × 100 | Indicates if pricing deters lead conversion |
| Churn Rate | Percentage of customers who cancel | Signals whether pricing exceeds customer willingness to pay |
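The formulas in the table translate directly into code. The sketch below uses hypothetical function names and made-up monthly figures; gross margin and churn are passed as fractions (0.80, 0.02) rather than percentages.

```python
def revenue_per_visitor(total_revenue: float, unique_visitors: int) -> float:
    """RPV = total revenue / unique visitors."""
    return total_revenue / unique_visitors


def lifetime_value(arpu: float, gross_margin: float, monthly_churn: float) -> float:
    """LTV = (ARPU x gross margin) / monthly churn."""
    return arpu * gross_margin / monthly_churn


def net_revenue_retention(starting: float, expansion: float, churned: float) -> float:
    """NRR (%) = (starting + expansion - churned) / starting x 100."""
    return (starting + expansion - churned) / starting * 100


def trial_to_paid_conversion(paid_customers: int, trial_signups: int) -> float:
    """Trial-to-paid (%) = paid customers / trial signups x 100."""
    return paid_customers / trial_signups * 100


# Hypothetical monthly figures for a single variant.
print(f"RPV: ${revenue_per_visitor(12_000, 4_000):.2f}")
print(f"LTV: ${lifetime_value(arpu=50, gross_margin=0.80, monthly_churn=0.02):,.0f}")
print(f"NRR: {net_revenue_retention(100_000, 15_000, 8_000):.1f}%")
print(f"Trial-to-paid: {trial_to_paid_conversion(45, 300):.1f}%")
```

Computing each metric per variant, with the same definitions, keeps the control and test groups comparable.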

For reliable results, aim for a 95% confidence level and 80% statistical power in your experiments. This typically requires at least 100 conversions per variant for B2C products and 30–50 conversions for high-ticket B2B offerings. Run tests for at least two full sales cycles to account for seasonal trends and shifts in buyer behavior.
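To turn a confidence level and power target into a traffic requirement, one common approach is the normal-approximation formula for comparing two proportions. The sketch below is one such estimate, not the only valid method; the baseline rate and relative effect are hypothetical inputs, and the result counts visitors per variant rather than conversions.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, relative_mde: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed per variant to detect a relative change of
    `relative_mde` in conversion rate with a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence -> ~1.96
    z_beta = NormalDist().inv_cdf(power)           # 80% power -> ~0.84
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2
    return math.ceil(n)


# Hypothetical: 5% baseline conversion, smallest effect of interest is 15% relative.
n = sample_size_per_variant(baseline_rate=0.05, relative_mde=0.15)
print(f"Visitors per variant: {n:,}")
```

Smaller effects need far more traffic: halving the minimum detectable effect roughly quadruples the required sample.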

Selecting Variables and Testing Methods

With your goals and metrics in place, the next step is deciding what to test and how to test it. Choosing pricing variables carefully is key to gaining clear, actionable insights. The golden rule? Test one element at a time. Changing multiple variables simultaneously makes it impossible to pinpoint which specific change influenced the results. With that in mind, let’s dive into the specifics.

Which Pricing Variables to Test

When testing pricing, follow a logical sequence: start with plan architecture and packaging clarity, then move to value anchoring and psychological positioning, followed by discount strategies, and finally price points. This order is crucial. For example, testing whether $49 or $59 works better is pointless if customers don’t even understand which plan suits their needs.

Begin by focusing on plan architecture. This includes experimenting with plan names (like "Starter/Pro" vs. "Basic/Premium"), how features are organized, and how tiers are positioned. Next, evaluate pricing model structures - should you use per-user pricing, usage-based pricing, or flat-rate subscriptions? Once that’s clear, explore discount strategies. This could mean testing annual versus monthly billing discounts or volume-based pricing tiers. Finally, test specific price points within the structure you’ve established.

Why isolate variables? Testing one at a time ensures statistical clarity and avoids the pitfalls of multivariate tests, which often require 3–4 times more traffic and frequently fail to reach conclusive results due to sample size limitations (about 57% of A/B tests fall short this way). By keeping everything else constant - features, messaging, checkout flow - you can confidently attribute any changes in conversion or revenue to the specific pricing tweak. This approach also helps you monitor for unintended consequences, like sudden churn spikes, without adding unnecessary complexity.

A/B Testing vs. Multi-Armed Bandit Testing

Once you’ve identified the variables to test, the next decision is how to test them. Two popular methods are A/B testing and multi-armed bandit testing. Each has its strengths and weaknesses, and the choice depends on your goals and resources.

| Method | Description | Advantages | Disadvantages | SaaS Suitability Level |
|---|---|---|---|---|
| A/B Testing | Split traffic into two groups and test one variable | Easy to set up and delivers clear, interpretable results | Needs a larger sample size and may risk brand reputation if customers notice price variations | Best for early-stage pricing experiments and clear comparisons |
| Multi-Armed Bandit | Dynamically allocates traffic to better-performing options in real time | Faster optimization and minimizes opportunity cost during testing | Requires complex setup and real-time monitoring tools | Ideal for high-traffic SaaS products with advanced infrastructure |

For most SaaS businesses, A/B testing is the go-to method, especially for first-time pricing experiments. It’s straightforward, requires less infrastructure, and produces clean, easy-to-interpret data. On the other hand, multi-armed bandit testing is better suited for companies with high traffic volumes that can handle the complexity of real-time adjustments. Whichever method you choose, aim for at least 400 conversions per variant when you need to detect moderate changes (10–20%); smaller samples can only reliably detect larger effects.
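For readers unfamiliar with how a bandit "dynamically allocates traffic", here is a minimal sketch using Thompson sampling, one of several bandit algorithms. It assumes each visitor either converts at the shown price or not, and ranks arms by sampled conversion rate times price. The class and function names, prices, and "true" conversion rates are all hypothetical.

```python
import random


class PriceArm:
    """One price point and the traffic it has seen so far."""

    def __init__(self, price: float):
        self.price = price
        self.visitors = 0
        self.conversions = 0

    def sample_expected_revenue(self, rng: random.Random) -> float:
        # Draw a plausible conversion rate from a Beta(1 + successes, 1 + failures)
        # posterior, then scale by price to get revenue per visitor.
        rate = rng.betavariate(1 + self.conversions,
                               1 + self.visitors - self.conversions)
        return rate * self.price


def choose_arm(arms, rng):
    """Show the price whose sampled revenue per visitor is highest."""
    return max(arms, key=lambda arm: arm.sample_expected_revenue(rng))


def record(arm: PriceArm, converted: bool) -> None:
    arm.visitors += 1
    arm.conversions += int(converted)


# Simulation with made-up conversion rates, for illustration only.
rng = random.Random(42)
true_rates = {49: 0.10, 59: 0.09}
arms = [PriceArm(price) for price in true_rates]
for _ in range(5000):
    arm = choose_arm(arms, rng)
    record(arm, rng.random() < true_rates[arm.price])

for arm in arms:
    print(f"${arm.price}: {arm.visitors} visitors, {arm.conversions} conversions")
```

Because traffic shifts toward the apparent winner, the losing arm ends up with less data, which is part of why bandit results are harder to interpret than a fixed 50/50 split.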

Running Pricing Experiments Without Disrupting Customers

Once you've nailed down your segmentation and metrics, the next step is running experiments in a way that minimizes disruption. This phase is all about translating plans into action while keeping things under control. A pricing experiment doesn’t mean applying new rates across your entire customer base - it’s about managing exposure carefully and spotting early warning signs before any issues grow.

Testing on New or Low-Risk Customers First

The safest way to experiment with pricing? Start with new customers. Apply the updated rates only to those who sign up after a specific date. This ensures your existing customers - and your current annual recurring revenue - remain unaffected. Since new customers don’t know your old pricing, they’re less likely to feel it’s unfair, reducing the risk of churn.

It’s also critical to maintain trust by grandfathering existing customers. Take HubSpot as an example: when they restructured pricing, they grandfathered all current users and gave a 12-month notice before changes kicked in. This approach helped them avoid significant churn, even with price increases. Studies show that 64% of users lose trust in a brand when faced with unexpected price hikes.

Beyond targeting new customers, you can segment your tests by geography, acquisition channel, or company size. For instance, testing a price increase in a secondary market first can help protect your primary revenue streams. Similarly, segmenting by acquisition channel - such as paid ads versus organic search - can reveal how different groups react to pricing changes. Just remember: if you’re testing B2B pricing, ensure consistency at the account level. Everyone from the same company domain should see the same price.
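One way to keep pricing consistent at the account level is to derive the variant from a hash of the company domain instead of assigning it per user. The sketch below is an illustration under assumptions: the experiment name, the `exposure` share, and the function name are hypothetical, and shared email domains (such as free webmail providers) would need separate handling.

```python
import hashlib


def assign_variant(email: str, experiment: str = "pricing_exp_v1",
                   exposure: float = 0.10) -> str:
    """Deterministically bucket an account by email domain.

    Everyone at the same domain gets the same answer, and only about
    `exposure` of all domains see the new price.
    """
    domain = email.rsplit("@", 1)[-1].strip().lower()
    digest = hashlib.sha256(f"{experiment}:{domain}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return "variant" if bucket < exposure else "control"


# Two colleagues at the same (hypothetical) company always match.
print(assign_variant("ana@example-corp.com") == assign_variant("Raj@Example-Corp.com"))  # True
```

Including the experiment name in the hash means a later test reshuffles accounts instead of reusing the same 10%.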

| Testing Method | Risk Level | Best For |
|---|---|---|
| New Customer Cohorts | Low | Protecting existing brand reputation and core revenue |
| Grandfathering | Low | Retaining long-term customers during a major price hike |
| Segment-Based (Geo/Industry) | Medium | Testing regional price elasticity or industry-specific value |
| Staged Rollout (10% Traffic) | Medium | Identifying technical bugs or immediate conversion issues |

Start small. Roll out your experiment to just 10% of your traffic for the first 48 hours. This limited exposure lets you identify technical glitches, unexpected user behavior, or conversion drops before they affect a larger audience. If all goes smoothly, you can gradually expand the test.

Using Tools to Track and Adjust Experiments

Once your experiment is live, real-time monitoring becomes essential. Pricing experiments can veer off course quickly, so waiting until the end of a multi-week test to review results can be risky. Keep an eye on leading indicators like trial-to-paid conversion rates and support ticket volume. For instance, a spike in inquiries like “Why is this so expensive?” or an early drop in conversions are red flags that need immediate attention.

Set up dashboards to track metrics like conversion rates, average revenue per user (ARPU), and Net Promoter Score (NPS) in real time. Tools like Stripe Billing make it easier to manage multiple price IDs for the same product, allowing you to randomize customer exposure while maintaining clear reporting. Optimizely is another great tool for ensuring your sample sizes are large enough and your results statistically significant. That said, tools alone can’t replace human judgment. You’ll need to weigh the data - like a small dip in conversions versus a meaningful boost in ARPU - and make decisions accordingly. Partnering with experts who understand both the technical and strategic sides of pricing can be a game-changer. Artisan Strategies, for example, offers support for analytics setup and iterative testing.

"Pricing is the most important lever that SaaS companies have, but almost none of them systematically optimize it."

– Patrick Campbell, CEO of ProfitWell

Analyzing Results and Implementing Changes

Carefully interpreting your data before solidifying changes is crucial. This step often determines whether a company achieves pricing success or risks revenue losses and customer dissatisfaction.

How to Evaluate Experiment Results

First, ensure your results reach a 95% confidence level and confirm that any revenue gains justify the complexity of the experiment. Beyond statistical significance, think about economic value: does the additional revenue outweigh the operational challenges of adopting the change?
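Checking for a 95% confidence level usually means running a significance test and looking for a p-value below 0.05. A minimal sketch using a two-proportion z-test on conversion rates follows; the counts are hypothetical, and the test covers conversion only, so revenue differences would need a separate comparison.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion
    rate between control (a) and variant (b)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: control converts 500 of 10,000, variant 430 of 10,000.
z, p_value = two_proportion_z_test(500, 10_000, 430, 10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}, significant at 95%: {p_value < 0.05}")
```

A significant conversion drop is not automatically a loss: the economic question is whether higher revenue per customer outweighs it.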

Stay focused on metrics that align with your initial goals. For example:

- If revenue growth was your goal, track Average Revenue Per User (ARPU) and Lifetime Value (LTV).

- If retention was the priority, monitor churn rate and renewal rates closely.

It’s also important to break down results by customer segments. Aggregated data can hide critical variations. For instance, SMB and Enterprise customers often show dramatic differences in price sensitivity, sometimes varying by 3–5×.
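A short example shows how an aggregate can hide a segment-level problem. The data below is fabricated for illustration, and `conversion_by_segment` and `make_rows` are hypothetical helpers.

```python
from collections import defaultdict


def conversion_by_segment(rows):
    """rows: iterable of (segment, variant, converted) tuples.
    Returns conversion rate keyed by (segment, variant)."""
    counts = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variant, converted in rows:
        counts[(segment, variant)][0] += int(converted)
        counts[(segment, variant)][1] += 1
    return {key: conv / n for key, (conv, n) in counts.items()}


def make_rows(segment, variant, conversions, visitors):
    """Build illustrative rows: the first `conversions` visitors convert."""
    return [(segment, variant, i < conversions) for i in range(visitors)]


rows = (
    make_rows("SMB", "control", 10, 100)
    + make_rows("SMB", "variant", 5, 100)
    + make_rows("Enterprise", "control", 10, 100)
    + make_rows("Enterprise", "variant", 10, 100)
)
rates = conversion_by_segment(rows)
for key in sorted(rates):
    print(key, f"{rates[key]:.0%}")
```

In this made-up data the variant's overall conversion falls from 10% to 7.5%, but the entire drop comes from SMB accounts while Enterprise is unchanged.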

Don’t overlook qualitative feedback. A sudden surge in support tickets with questions like, “Why is this so expensive?” could signal a perception problem, even if revenue temporarily rises. Combining this feedback with quantitative metrics helps maintain customer trust. Companies that excel in pricing strategies tend to outperform their peers by 25% in total shareholder returns. On the flip side, 98% of SaaS companies that struggle with pricing experiments lack clear success metrics from the beginning.

Be cautious of short-term wins - they can often hide long-term challenges. In fact, 43% of positive short-term results show diminishing returns when examined over 12 months or more. To avoid this, run your experiment for at least one complete business cycle (30–90 days) to capture recurring patterns and billing cycles. Tag participants with cohort identifiers (e.g., pricing_exp_v1_variant) so you can monitor their LTV and churn over the next 6–12 months.

Once you’ve validated your findings, reduce risk by rolling out changes gradually.

Rolling Out Changes in Stages

After confirming that your pricing experiment delivers both statistical and economic benefits, the next step is a phased rollout. This controlled approach significantly reduces risk - companies that adopt phased rollouts report 15–20% fewer negative customer reactions.

Start by grandfathering existing customers. Apply new pricing only to those who sign up after a specific date. This protects your current revenue base and preserves brand loyalty. A great example is HubSpot, which provided existing customers with a 12-month notice period during a pricing restructure. This approach minimized churn, even with significant price increases.

You can also roll out changes selectively by:

- Geographic region

- Customer acquisition channel

- Industry vertical

This strategy prevents direct price comparisons and allows you to fine-tune for local market conditions.

Set guardrail metrics ahead of time. Establish clear stop conditions - like a 30% drop in conversions or a spike in support tickets - that would trigger an immediate rollback. Ensure your sales and support teams are well-prepared with scripts and training to handle customer concerns about pricing changes. Internal communication is key: pricing changes that are clearly explained to internal teams show a 22% higher success rate.

"A pricing win that eventually results in dissatisfaction isn't really a win."

– Stripe

Conclusion

Disciplined pricing experiments that follow the strategies outlined above can help businesses grow while keeping customer loyalty intact.

When done right, pricing experiments can increase revenue without eroding trust. Think of pricing as a dynamic process that adapts alongside your customers and market trends. Research shows that companies running well-planned pricing experiments often see revenue growth within six months - as long as customer trust remains a priority.

One key principle? Respecting existing prices for current customers. As Krish Subramanian, Co-Founder and CEO of Chargebee, emphasizes:

"Honoring the existing price for customers is a definite rule that you need to follow."

By limiting new pricing changes to future signups, businesses can sidestep the churn risks that come with surprising long-term customers.

Start small - test pricing changes on new users or low-risk groups, and validate packaging adjustments before touching the actual price point. Fine-tune feature bundles and messaging to maximize results while keeping the base price stable. And don’t forget: tracking the right metrics from the outset is crucial. Even a modest 1% improvement in pricing strategy can translate into an 11% profit boost.

Success hinges on data and thorough testing. Stick to statistical rigor - run tests for at least two full sales cycles (6–8 weeks) and act only when results hit a 95% confidence level. Mike Kulakov, Co-Founder of Everhour, sums it up perfectly:

"Pricing doesn't just decide your revenue. It decides who your customers are, how they treat you, and what kind of company you build."

Ultimately, pricing should be treated as an ongoing effort. The most successful companies test carefully, communicate openly, and prioritize customer retention. After all, a 5% increase in customer retention can drive profit growth of 25% to 95%. Keep refining, and you’ll not only grow revenue but also strengthen the trust that keeps customers coming back.

FAQs

How do I pick the safest customers to test new pricing on?

To test new pricing strategies without upsetting your customer base, segment your audience and start with the groups that have the least at stake. New signups and trial users are the safest, because they have no prior price expectations; specific regions, acquisition channels, or inactive accounts come next. Keep existing, highly engaged subscribers on their current pricing, since they are the core revenue you are trying to protect.

When creating these segments, consider factors like their willingness to pay, usage habits, and the value they perceive in your offering. By focusing on these groups, you can run controlled experiments, closely monitor their responses, and tweak your pricing approach - all while minimizing the risk of losing customers.

How long should a pricing test run to avoid misleading results?

When running a pricing test, it’s crucial to allow enough time to gather data that’s statistically reliable. This typically means letting the test run for one to two full business cycles, or until customer behavior becomes consistent. Some experts cite 1-2 weeks as an absolute minimum, but a window that short does not cover a full monthly billing cycle. The ideal duration depends on factors such as how much traffic your site gets and the size of the customer segment you're analyzing. Keep an eye on key metrics like conversion rate and revenue - once they stabilize and you have reached your target sample size, you’ll have a clearer picture for making decisions.

What’s the fastest way to spot churn risk during a pricing experiment?

The fastest signals come from behavior and engagement metrics monitored in real time. Look out for warning signs like a drop in usage, fewer logins, a spike in pricing-related support tickets, or cancellations concentrated in particular customer segments. Billing features such as plan overrides and customer metadata can help you tag test participants and monitor how they respond to changes without interfering with their billing process. Checked against the stop conditions you set in advance, these signals let you quickly spot dissatisfaction or retention challenges during testing phases.
