Balancing User Engagement and Ethics in SaaS

SaaS should protect user attention by ditching dark patterns, measuring value over time, and designing products that help people finish tasks and move on.

SaaS product design often prioritizes keeping users engaged, an approach that can harm user well-being. Many platforms use tactics like infinite scroll, autoplay, and deceptive patterns to maximize time spent, even at the expense of user autonomy. These strategies fuel profits but leave users feeling trapped, distracted, and dissatisfied.

There’s a better way: Digital Sanctuaries. These are software environments that respect user attention and help people achieve their goals without exploiting them. Key principles include:

- Prioritizing user control: Avoid manipulative design and give users clear choices.

- Respecting attention as limited: Use stopping cues and avoid addictive loops.

- Minimizing data collection: Be transparent about privacy and collect only what’s necessary.

- Measuring value, not engagement: Track task completion and outcomes instead of time spent.

The Engagement-Ethics Conflict in SaaS

Why Engagement Became the Primary Metric

The rise of engagement as the go-to metric for success wasn’t random - it was driven by profit motives. In the attention economy, platforms realized that keeping users engaged for longer sessions meant more opportunities to show targeted ads, increasing revenue [3].

Silicon Valley’s obsession with growth played a huge role in this shift. Early on, SaaS companies focused on gaining users at any cost, prioritizing market share over immediate returns. But once growth slowed, the focus shifted to squeezing as much revenue and attention as possible from existing users. Metrics like Daily Active Users (DAU), Monthly Active Users (MAU), and session duration became the benchmarks for success. Yet, these numbers don’t reflect whether users actually achieved their goals or found value in their time spent [3].

Technology also made these tactics easy to implement at scale. Between 2011 and 2014, for instance, LinkedIn accessed users' contact lists and sent invitations that appeared to come directly from the user, without their consent. This led to a class-action lawsuit [6]. Similarly, Hotmail drove viral growth by automatically attaching the message "Get your free email with Hotmail" to every outgoing email, turning users into unintentional marketers [6]. These strategies worked so well that they became standard practice across the industry. They also sparked debates about ethics, paving the way for concepts like Digital Sanctuaries, which aim to respect user intent.

The Ethical Problems of Engagement-Driven Design

To keep users engaged, many platforms resort to tactics that blur ethical boundaries. These strategies generally fall into four categories: pressuring (creating social obligations), enticing (offering unpredictable rewards), lulling (promoting mindless, low-effort interactions), and trapping (making it hard to leave) [3].

Features like infinite scroll, autoplay, and constant notifications are designed to eliminate natural stopping points, creating dopamine-driven loops that leave users unsatisfied [4][7]. The use of intermittent rewards - similar to what makes slot machines addictive - keeps users in a cycle of wanting more but never feeling fulfilled [4][7].

The effects show up in both research and enforcement. A survey of 183 college students found that 44% felt their smartphones negatively affected their academic or professional lives [4]. And in June 2023, the FTC sued Amazon for deliberately making Prime subscriptions difficult to cancel. This tactic, called "obstruction," increases the effort required to find and use the cancellation option [2][6].

"Design has been weaponized using behavioral research to serve the aims of the surveillance economy." - Association for Computing Machinery [6]

The problem is that these manipulative designs often go unnoticed. Interfaces are created to fade into the background, making it hard for users to spot the ways they’re being influenced. Instead of recognizing these tactics, users often blame themselves for a lack of self-control. This highlights the need for a shift toward design principles that prioritize user autonomy and well-being.

What Are "Digital Sanctuaries"?

Digital Sanctuaries aim to counteract the manipulative strategies of engagement-driven design by focusing on user goals. Instead of optimizing for endless screen time, these platforms prioritize helping users complete tasks efficiently and reclaim their time. They treat attention as a precious resource, not something to exploit.

This doesn’t mean making software less effective - it means cutting out the noise. Digital Sanctuaries remove the layers of manipulation that have been added over years of engagement-focused design. The guiding principle is simple: software should serve the user’s needs, not the company’s bottom line. While traditional SaaS asks, “How do we keep users engaged longer?”, Digital Sanctuaries ask, “How do we help users achieve their goals and move on with their day?”

The difference is striking. Traditional SaaS reduces friction to encourage endless scrolling, but Digital Sanctuaries intentionally add friction to support mindful disengagement [6]. They make limits a feature, not a flaw. At Artisan Strategies, our products - Onsara, Mochi, and Sutta 423 - are built around this philosophy. They’re designed to foster focus, create meaningful rituals, and encourage what we call "cozy" consumption, offering a refreshing alternative to the constant noise of the digital world.

Core Principles for Ethical Engagement Design

Put User Agency First

Ethical engagement starts with giving users control. When Docparser introduced its "SmartAI Parser" and "ResumeAI Parser" in 2024, it made participation completely optional. These AI tools were clearly labeled, and users could customize data retention settings, ranging from immediate deletion to storage for up to 180 days. Lindsay Thompson, General Manager at Docparser, highlighted their approach: "We see responsible AI not just as a safeguard, but as a way to build trust while delivering meaningful innovation" [10].
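To make a user-chosen retention window concrete, here is a minimal Python sketch. It assumes each stored document carries an upload timestamp and treats a retention of 0 days as "delete immediately"; the `RetentionPolicy` class and `purge` helper are hypothetical illustrations, not Docparser's implementation.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

MAX_RETENTION_DAYS = 180  # upper bound from the example above; 0 means delete immediately


@dataclass(frozen=True)
class RetentionPolicy:
    """Hypothetical per-user retention setting, chosen by the user."""
    days: int

    def __post_init__(self) -> None:
        # Reject values outside the range the user can actually pick from.
        if not 0 <= self.days <= MAX_RETENTION_DAYS:
            raise ValueError(f"retention must be 0-{MAX_RETENTION_DAYS} days")

    def expired(self, uploaded_at: datetime, now: datetime) -> bool:
        """True once a document has outlived the user's chosen window."""
        return now - uploaded_at >= timedelta(days=self.days)


def purge(documents: dict[str, datetime], policy: RetentionPolicy,
          now: datetime | None = None) -> dict[str, datetime]:
    """Return only the documents the policy still allows us to keep."""
    now = now or datetime.now(timezone.utc)
    return {doc_id: ts for doc_id, ts in documents.items()
            if not policy.expired(ts, now)}
```

Making 0 a valid value keeps "don't store my data" a first-class choice rather than a hidden exception.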

Respecting user agency isn’t just the right thing to do - it also makes good business sense. Trust leads to stronger customer relationships, which in turn drive higher lifetime value, reduced churn, and better conversion rates from free to paid plans [8][13]. Transparent design fosters loyalty, while deceptive tactics often alienate users, resulting in higher attrition.

To avoid manipulative practices, eliminate dark patterns. For example, use active opt-ins instead of pre-checked boxes, and make canceling a subscription as easy as signing up for one. As Ioana Wilkinson, a business strategist, puts it: "Innovation without responsibility is a risky game. Embed ethical considerations into your product development process to build trust and credibility with your users" [8].

Another critical aspect of ethical design is respecting the most valuable resource users have: their attention.

Treat Attention As a Limited Resource

Attention is finite, and ethical engagement design recognizes this. Shifting the focus from engagement metrics to value-based outcomes starts by acknowledging that more time spent on a platform doesn’t necessarily mean a better experience. In fact, companies lose about 10% of their highly engaged users each month, showing that engagement alone doesn’t guarantee loyalty or reduce churn [11].
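A quick worked calculation shows how fast that loss compounds. Assuming, for simplicity, that the 10% monthly loss stays constant (real churn rarely does), the share of a highly engaged cohort still around after twelve months is:

$$
R_{12} = (1 - 0.10)^{12} = 0.9^{12} \approx 0.28
$$

Under that assumption, roughly 72% of today's most engaged users would be gone within a year.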

A good rule of thumb? Design interactions you wouldn’t mind experiencing yourself [5]. This could mean replacing infinite scrolling with clear stopping points, ensuring notifications are meaningful, and avoiding the addictive reward systems often found in apps [12]. As the Designing Mindfulness Principles emphasize: "Human attention is too often peripheral in the race for driving sales and advertising revenue. It is important to recognise that the way you design your products has an impact on the human mind and mental wellbeing" [12].

Simple changes can make a big difference. Add features like "You're all caught up" messages, disable autoplay, and avoid creating reward loops that encourage compulsive behavior [9][12]. By stripping away distractions and focusing on what users truly need, you create a better, more respectful experience. This naturally ties into the importance of transparent and minimal data practices.

Use Minimal and Transparent Data Practices

Building trust starts with privacy-by-design. Data minimization means only collecting what’s absolutely necessary for your service, rather than hoarding data "just in case" it might be useful later [8]. Use granular consent options that separate permissions for analytics and personalized ads. Bundling everything into a single consent request isn’t just questionable - it’s unethical.

Make it easy for users to withdraw consent. Data controls should be simple to find, perhaps in a settings menu or through a visible icon - not hidden in lengthy terms and conditions [14]. When users revoke consent, treat it as a request to erase their data. For non-essential purposes, opt for session-based storage instead of persistent tracking.
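These practices can be sketched as a small per-purpose consent record. This is a minimal Python sketch, assuming consent is tracked per named purpose; the purpose names, the `ConsentRecord` class, and the `erase_data_for` callback are hypothetical. Every optional purpose starts switched off (an active opt-in rather than a pre-checked box), and revoking a purpose triggers erasure of the data collected for it.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

# Hypothetical optional purposes, kept separate so consent stays granular.
OPTIONAL_PURPOSES = ("analytics", "personalized_ads")


@dataclass
class ConsentRecord:
    user_id: str
    # Every optional purpose defaults to False: nothing is pre-checked.
    granted: dict[str, bool] = field(
        default_factory=lambda: {p: False for p in OPTIONAL_PURPOSES})

    def opt_in(self, purpose: str) -> None:
        """Record an explicit, purpose-specific opt-in."""
        if purpose not in self.granted:
            raise KeyError(f"unknown purpose: {purpose}")
        self.granted[purpose] = True

    def revoke(self, purpose: str,
               erase_data_for: Callable[[str, str], None]) -> None:
        """Withdraw consent and treat the withdrawal as an erasure request."""
        if self.granted.get(purpose):
            self.granted[purpose] = False
            erase_data_for(self.user_id, purpose)

    def allows(self, purpose: str) -> bool:
        return self.granted.get(purpose, False)
```

Keeping analytics and ad personalization as separate keys is what makes the consent granular; a single combined flag would reintroduce the bundling problem.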

The way you communicate matters too. Avoid manipulative language like "No thanks, I don’t like saving money", which pressures users into decisions that benefit the company [2]. Instead, provide clear, neutral explanations about what data you’re collecting and why. This commitment to transparency reinforces the principle of user-centered design.

Test privacy notices with real users to ensure they’re easy to understand. As Sage Cheng, Head of Design and Creative Production at Access Now, explains: "Manipulating how people navigate a website or app is a choice" [14]. Choosing transparency builds trust and aligns with ethical design principles.

[ENG] Ethics in Digital Design: Making informed and responsible decisions | Alexandra Mihai

::: @iframe https://www.youtube.com/embed/E-v5txvqniY :::

How to Design Digital Sanctuaries

Creating digital sanctuaries means rethinking how users interact with technology, focusing on helping them disconnect meaningfully and regain control over their time.

Build in Stopping Cues and Clear Endpoints

Many apps pull users into a loop of endless scrolling, making it hard to step away. This trap, often called "The Vortex", turns a quick check-in into an unplanned time sink that undermines user control [15]. Features like infinite scroll and autoplay remove natural stopping points, making disengagement nearly impossible [12]. Digital sanctuaries, on the other hand, are designed to help users complete tasks and leave with ease.

To counter this, design with clear endpoints in mind. For instance, display a "You're all caught up" message when there's no more content to browse. Turn off autoplay by default. These small changes let users disengage with a sense of accomplishment rather than guilt. As the Designing Mindfulness Principles suggest, create experiences that allow users to step away feeling calm and productive, not like they've wasted their time [12].
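As a rough illustration, here is a minimal Python sketch of a finite feed. It assumes the client requests items page by page; the `FeedPage` and `PlaybackSettings` types, the `caught_up` flag, and `get_page` are hypothetical names, not any particular framework's API. Instead of silently backfilling with recommendations when new content runs out, the server reports the end explicitly, giving the client a natural place to show "You're all caught up."

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class FeedPage:
    items: list[str]
    next_cursor: int | None  # None means there is nothing left to fetch
    caught_up: bool          # tells the client to render a stopping cue


@dataclass(frozen=True)
class PlaybackSettings:
    autoplay: bool = False   # off by default; users can opt in


def get_page(unseen: list[str], cursor: int = 0, page_size: int = 10) -> FeedPage:
    """Return one page of unseen items and flag the end instead of backfilling."""
    page = unseen[cursor:cursor + page_size]
    end = cursor + len(page)
    done = end >= len(unseen)
    return FeedPage(items=page, next_cursor=None if done else end, caught_up=done)
```

The design choice is that "no more content" is an explicit state in the response rather than an empty list the client has to interpret.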

Use Ritual-Based Flows Instead of Addictive Feeds

Ritual-based flows offer users a focused, intentional experience, steering clear of the endless, addictive content loops found in many apps. This approach emphasizes guiding users through meaningful, finite tasks rather than trapping them in a cycle of consumption [12].

Take Sutta 423 as an example. It presents one Buddhist verse per day: no endless scrolling, no algorithm-driven feeds. You read the verse, reflect on it, and move on. The simplicity isn’t a limitation; it’s the goal. Restricting content lets users engage more deeply. This method aligns with the concept of "information zones", where users retain full control over their engagement without being bombarded by notifications or manipulative prompts [12].
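A one-item-per-day ritual takes very little code. The minimal Python sketch below assumes a fixed, ordered list of verses and picks one per calendar day from the date alone; the `VERSES` list and `verse_for` function are placeholders, not how Sutta 423 actually works. Because the choice depends only on the date, there is nothing more to load no matter how often the user opens the app.

```python
from __future__ import annotations

from datetime import date

# Placeholder content: any finite, ordered collection works.
VERSES = ["verse 1", "verse 2", "verse 3"]


def verse_for(day: date, verses: list[str] = VERSES) -> str:
    """Return the single verse for a calendar day, cycling through the list."""
    if not verses:
        raise ValueError("no verses configured")
    # toordinal() gives consecutive integers for consecutive days.
    return verses[day.toordinal() % len(verses)]
```

Same day, same verse: reopening the app repeats today's reading instead of serving something new.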

By incorporating structured rituals, this design approach enhances focus and promotes a healthier interaction with technology.

Make Constraint a Feature

Many software tools suffer from feature overload, diluting their purpose and overwhelming users. Digital sanctuaries focus on eliminating unnecessary features, protecting user attention and reducing decision fatigue. As Neil M. Richards and Woodrow Hartzog point out, the traditional model assumes "more engagement equals more ads watched equals more revenue" [1]. Constraint-based design challenges this by prioritizing simplicity and focus.

Consider Onsara, a focus app for individuals with ADHD. Instead of trying to be a jack-of-all-trades - handling project management, calendars, notes, and habits - it does one thing well: helping users decide what to work on and start immediately. Similarly, the PDF Reader focuses solely on letting users read PDFs in their browser. No annotations, no cloud syncing, no unnecessary extras - just the document and the user.

This philosophy is often referred to as "Quiet SaaS", where success comes from technical craftsmanship and reliability rather than aggressive marketing or viral trends [16]. As Jakub Jirak says, "Simplicity isn't just elegance; it's survival" [16]. By excelling at one task, these tools build trust and foster sustainable growth, offering genuine value without resorting to manipulative tactics.

How to Implement Ethics in SaaS Development


Creating ethical SaaS products doesn’t have to mean adding layers of unnecessary complexity. Instead, it’s about rethinking how you define success, test features, and review designs - all while keeping user well-being at the center. Start by shifting your focus to metrics that reflect real user value.

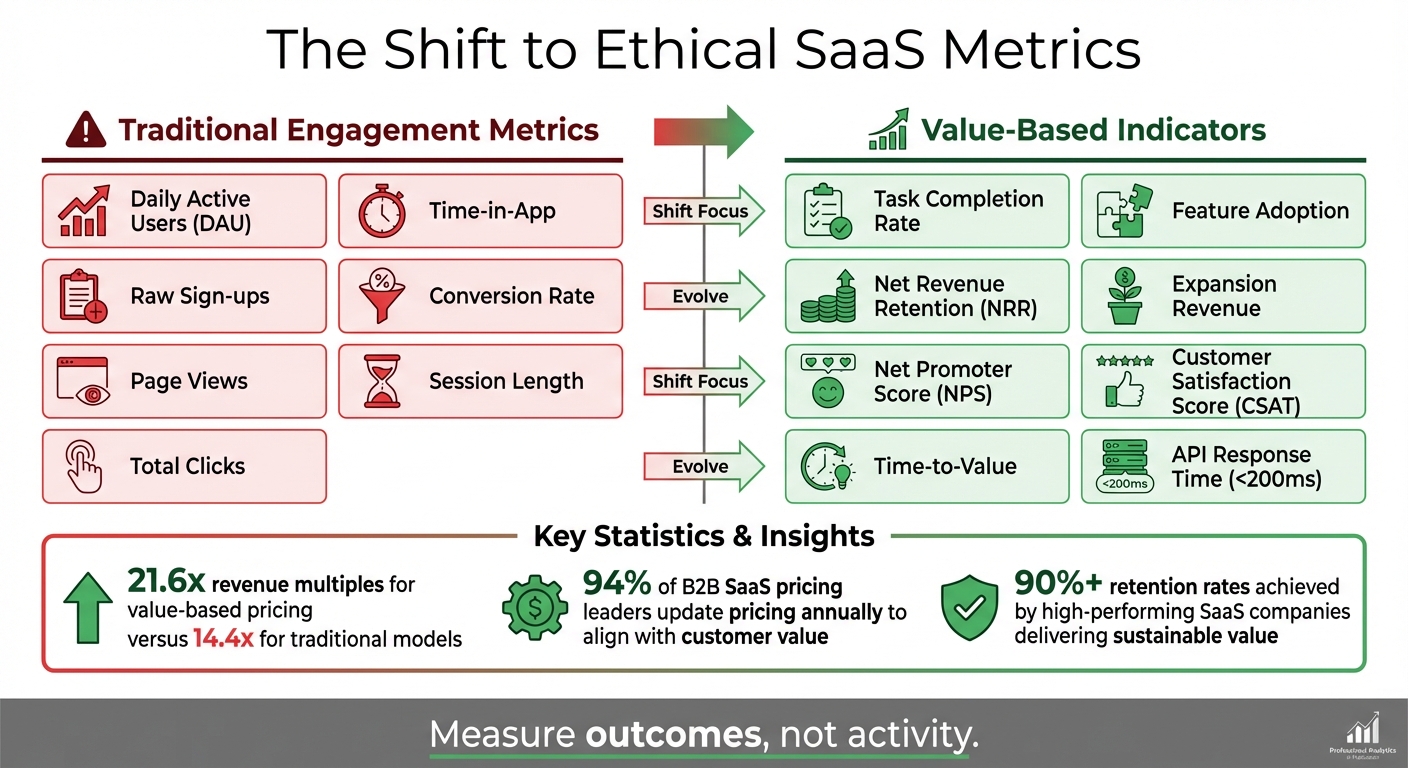

Replace Engagement Metrics with Value Indicators

Many SaaS companies rely on metrics like daily active users (DAU), session length, or time spent in-app. While these numbers might look impressive, they often incentivize practices that waste users’ time rather than help them achieve meaningful results. A better approach? Measure outcomes, not activity.

For instance, instead of tracking how long users stay logged in, measure how quickly they can complete their key tasks. If you’re building a project management tool, prioritize metrics like "time-to-first-task-completion" over "total minutes logged in." Similarly, focus on task completion rates or feature adoption rather than raw engagement numbers.
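To show what measuring outcomes can look like, here is a minimal Python sketch that computes task completion rate and median time-to-first-task-completion from an event log. The event names (`signed_up`, `task_started`, `task_completed`) and the tuple-based log format are assumptions for illustration; substitute whatever your analytics pipeline actually records.

```python
from __future__ import annotations

from datetime import datetime
from statistics import median

# Each event: (user_id, event_name, timestamp). Event names are hypothetical.
Event = tuple[str, str, datetime]


def task_completion_rate(events: list[Event]) -> float:
    """Share of started tasks that were completed."""
    started = sum(1 for _, name, _ in events if name == "task_started")
    completed = sum(1 for _, name, _ in events if name == "task_completed")
    return completed / started if started else 0.0


def median_time_to_first_completion(events: list[Event]) -> float | None:
    """Median hours from sign-up to each user's first completed task."""
    signup: dict[str, datetime] = {}
    first_done: dict[str, datetime] = {}
    for user, name, ts in sorted(events, key=lambda e: e[2]):
        if name == "signed_up":
            signup.setdefault(user, ts)
        elif name == "task_completed":
            first_done.setdefault(user, ts)
    hours = [(first_done[u] - signup[u]).total_seconds() / 3600
             for u in first_done if u in signup]
    return median(hours) if hours else None
```

Neither number improves just because users spend more time in the app.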

Value discovery interviews can help uncover what users truly care about. Talk to your customers and ask them to quantify the benefits they see from your product. For example, if your tool reduces reporting time by 20%, that’s a tangible metric worth tracking - unlike vague descriptions like "automated reporting" [19].

The numbers back this up. SaaS companies that use value-based pricing models, where costs align with the value delivered, are valued at 21.6x revenue, compared to 14.4x for traditional subscription models [19]. Charging based on outcomes rather than time or seats also forces you to measure what really matters.

"Focus on customer success. Creating a solid customer success capability that ensures the customer achieves the desired outcomes is necessary for value-based growth success." - Krzysztof Szyszkiewicz and Jakub Jukowski, Valueships [17]

Here’s a comparison of what to track:

| Traditional Engagement Metrics | Value-Based Indicators |
|---|---|
| Daily Active Users (DAU), Time-in-App | Task Completion Rate, Feature Adoption [18][20] |
| Raw Sign-ups, Conversion Rate | Net Revenue Retention (NRR), Expansion Revenue [18][19] |
| Page Views, Session Length | Net Promoter Score (NPS), Customer Satisfaction Score (CSAT) [17][20] |
| Total Clicks | Time-to-Value, API Response Time (under 200 ms) [18][20] |
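Net Revenue Retention, one of the value indicators in the table, has a standard definition: the revenue you keep from an existing customer cohort, including expansion and net of contraction and churn, divided by that cohort's starting revenue. A minimal Python sketch, with made-up figures in the usage line:

```python
def net_revenue_retention(starting_mrr: float, expansion: float,
                          contraction: float, churned: float) -> float:
    """NRR for one existing-customer cohort over a period (new customers excluded)."""
    if starting_mrr <= 0:
        raise ValueError("starting MRR must be positive")
    return (starting_mrr + expansion - contraction - churned) / starting_mrr


# Illustrative numbers only: $100k starting MRR, $15k upgrades,
# $3k downgrades, $7k lost to cancellations.
print(f"{net_revenue_retention(100_000, 15_000, 3_000, 7_000):.0%}")  # 105%
```

An NRR above 100% means the existing customer base grew in revenue despite cancellations, without counting any new sign-ups.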

This shift isn’t just a nice idea - it’s becoming standard practice. Over 94% of B2B SaaS pricing leaders now update their pricing annually to ensure it aligns with customer value [19]. High-performing SaaS companies with retention rates above 90% achieve this by delivering sustainable value, not by relying on tricks to keep users hooked [20].

Once you’ve adopted value-based metrics, the next step is to ensure your features are tested ethically.

Run Ethical Experiments and Harm Checks

With value-driven metrics in place, it’s time to evaluate whether your features genuinely benefit users. A/B testing, while useful, can easily veer into unethical territory when it prioritizes conversions over user well-being. For example, a 2019 study found that over 10% of popular e-commerce websites used deceptive design patterns, and another study found that 95% of trending apps in the Google Play Store contained similar practices, some with as many as seven manipulative patterns [2].

The issue isn’t A/B testing itself but what you’re testing for. Use an amended cognitive walkthrough to assess your designs. Walk through each feature while asking questions like:

- Does this require explicit user consent?

- Could this financially harm a user?

- Does it rely on emotional manipulation?

Consider your most vulnerable users - those who may have limited time or digital literacy - as you evaluate potential harm [2].
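One lightweight way to make this walkthrough repeatable is to record the answers per feature. This minimal Python sketch assumes a simple yes/no answer to each question above; the `HarmCheck` class and its field names are hypothetical, not part of the cited method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HarmCheck:
    """Answers from an amended cognitive walkthrough of one feature (hypothetical)."""
    feature: str
    needs_explicit_consent: bool
    has_explicit_consent: bool
    could_cause_financial_harm: bool
    uses_emotional_manipulation: bool
    harder_for_vulnerable_users: bool  # e.g. time-poor or less tech-savvy users

    def concerns(self) -> list[str]:
        """List every question that raised a red flag."""
        flags = []
        if self.needs_explicit_consent and not self.has_explicit_consent:
            flags.append("missing explicit consent")
        if self.could_cause_financial_harm:
            flags.append("possible financial harm")
        if self.uses_emotional_manipulation:
            flags.append("emotional manipulation")
        if self.harder_for_vulnerable_users:
            flags.append("disproportionate impact on vulnerable users")
        return flags
```

An empty list does not prove a feature is ethical; it only means the walkthrough did not surface an obvious problem.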

Be on the lookout for sludge, or unnecessary friction that makes it harder for users to act in their best interest. For example, if canceling a subscription requires multiple confirmation screens and a phone call, but signing up is a one-click process, that’s sludge. Similarly, if the “No thanks” button is gray and tiny while the “Subscribe” button is bright and prominent, it’s an example of obstruction [2].
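The cancellation example suggests a simple automated check. The Python sketch below assumes you can list the steps in each flow (screens, confirmations, calls); the flow data and the default `max_ratio` of 1.0 are hypothetical choices. It flags a pair of flows when leaving takes more steps than joining.

```python
from __future__ import annotations


def sludge_ratio(signup_steps: list[str], cancel_steps: list[str]) -> float:
    """How many times more steps cancelling takes than signing up."""
    if not signup_steps:
        raise ValueError("sign-up flow must have at least one step")
    return len(cancel_steps) / len(signup_steps)


def has_sludge(signup_steps: list[str], cancel_steps: list[str],
               max_ratio: float = 1.0) -> bool:
    """Flag flows where leaving is harder than joining (threshold is an assumption)."""
    return sludge_ratio(signup_steps, cancel_steps) > max_ratio


signup = ["click Subscribe"]
cancel = ["open settings", "confirm", "confirm again", "call support"]
print(has_sludge(signup, cancel))  # True
```

Step counts are a crude proxy: they won't catch a tiny gray "No thanks" button, so treat a check like this as a complement to manual review.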

"A deceptive pattern is a design pattern that prompts users to take an action that benefits the company employing the pattern by deceiving, misdirecting, shaming, or obstructing the user's ability to make another (less profitable) choice." - Maria Rosala, UX Specialist [2]

Pay special attention to confirmshaming, where interface copy guilts users into making a particular choice. Phrases like "No thanks, I don’t like saving money" are manipulative and erode trust [2].

To avoid these pitfalls, consider using tools like the Digital Ethics Compass, which provides a checklist of ethical questions across areas like data usage, automation, and behavioral design [21]. Incorporate these checks during development to catch issues early.

Once you’ve validated your features through ethical testing, formalize the process with lightweight team reviews.

Set Up Simple Ethics Reviews for Small Teams

You don’t need a full-fledged ethics department to create ethical products. Small teams can implement straightforward reviews that identify potential issues without bogging down development timelines. These reviews ensure that user well-being remains a priority throughout the process.

Involve a mix of team members - designers, developers, and product managers - to bring diverse perspectives to the table [21].

"Many of these stories start with a reasonably ordinary designer who makes everyday design decisions." - Digital Ethics Compass [21]

Appoint a well-being advocate within your team. This doesn’t have to be a dedicated role - just someone who consistently asks, “Is this in the user’s best interest?” during product discussions. Including well-being metrics in leadership KPIs can also help ensure ethical considerations aren’t sidelined when deadlines loom [12].

The Danish Design Center suggests memorizing five core principles of responsible design and applying them throughout the development process [21]. When ethics become second nature rather than an afterthought, potential problems are easier to catch early.

Consider holding periodic workshops (1–3 days) to assess your team’s ethical practices and refine existing solutions [21]. These don’t have to be formal or expensive - just gather your team, review your product with fresh eyes, and ask tough questions about whether each feature serves users or exploits them.

Finally, remember that ethical design isn’t just about doing the right thing - it also reduces legal risks. Many deceptive practices are now illegal under regulations like GDPR and CCPA [2][22]. By embedding ethics into your process, you’re protecting both your users and your business.

A Framework for Making Ethical Decisions

To align growth with user well-being, use a structured approach grounded in value-based metrics and ethical tests. A straightforward, three-step framework can guide you effectively.

Common Tradeoffs in SaaS Engagement Design

Balancing revenue and ethics is a challenge that tends to surface in predictable ways, and deceptive practices are more common than many teams assume. The 2019 study of 11,000 popular e-commerce sites found that over 10% employed deceptive design patterns, and mobile apps are no exception [2].

But these aren’t just ethical missteps; they’re risky business strategies. Regulations like GDPR and CCPA are cracking down on deceptive patterns, and users are increasingly turning to tools like ad-blockers and tracking protection. Deceptive patterns erode trust and cause long-term harm to brand relationships [2][12][22].

A key distinction lies between persuasion and coercion. Persuasive design uses psychological triggers - like social proof or anchoring - to help users achieve their goals through accurate information. Coercive design, on the other hand, tricks users into actions they wouldn’t normally take [2][24]. To navigate these tradeoffs, adopt a clear three-step decision framework.

A 3-Step Ethical Decision Framework

When you encounter a design decision that feels ethically tricky, this process can help you determine whether you’re genuinely helping users or exploiting them.

Step 1: Use the Manipulation Matrix

Ask yourself two critical questions: "Would I use this product myself?" and "Does it help users materially improve their lives?" [23]. Together, the two answers place your product in one of four quadrants. A facilitator, someone who uses their own product and believes it genuinely improves lives, operates in an ethical "sweet spot." If you’re designing features you wouldn’t personally use or that don’t truly benefit users, that’s a warning sign.
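The two questions form a 2×2 grid. In Nir Eyal's Manipulation Matrix, the quadrants are usually labeled Facilitator (you use it and it improves lives), Peddler (it improves lives but you don't use it), Entertainer (you use it but it doesn't materially improve lives), and Dealer (neither). A minimal Python sketch of that mapping, with a hypothetical function name:

```python
def manipulation_matrix(maker_uses_it: bool, materially_improves_lives: bool) -> str:
    """Map the two Manipulation Matrix questions to a quadrant label."""
    if maker_uses_it and materially_improves_lives:
        return "Facilitator"  # the ethical sweet spot
    if materially_improves_lives:
        return "Peddler"      # well-meaning, but the maker doesn't use it
    if maker_uses_it:
        return "Entertainer"  # used by the maker, little lasting benefit
    return "Dealer"           # neither: the clearest warning sign
```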

Step 2: Conduct the Regret Test

Pose this question to a sample group of users: "If you fully understood how this feature is designed to influence you, would you still take this action?" [24][25].

"If users would regret taking the action, the technique fails the regret test and shouldn't be built into the product... Getting people to do something they didn't want to do is no longer persuasion - it's coercion." – Nir Eyal, Author [24]

Testing with just five users often provides clear insights [24][25].
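Tallying the answers is straightforward. This minimal Python sketch assumes each participant gives a yes/no answer to the regret question; the `regret_test` function and its zero-tolerance default (a single "I'd regret it" fails the feature) are illustrative assumptions rather than part of the published test.

```python
from __future__ import annotations


def regret_test(would_still_act: list[bool], max_regret_share: float = 0.0) -> bool:
    """Return True if the feature passes the regret test.

    Each entry answers: "If you fully understood how this feature is designed
    to influence you, would you still take this action?"
    The default threshold (no regret at all) is an assumption.
    """
    if not would_still_act:
        raise ValueError("ask at least one user")
    regret_share = would_still_act.count(False) / len(would_still_act)
    return regret_share <= max_regret_share


answers = [True, True, False, True, True]  # five users, one would regret it
print(regret_test(answers))  # False
```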

Step 3: Evaluate Impact on Vulnerable Users

Deceptive patterns disproportionately harm users who are time-poor or less tech-savvy [2]. Before rolling out a feature, ask: "How would this affect someone with limited time or technical knowledge?" If the answer makes you uneasy, it’s time to revisit the design. These ethical safeguards not only inform better decisions but also lay the groundwork for sustainable business growth.

The Business Case for Digital Sanctuaries

By following this ethical framework, companies can create "Digital Sanctuaries" that protect user attention while ensuring long-term business success. Ethical design isn’t just a moral choice - it’s a strategic advantage that builds user trust in an era of tighter regulations [22].

"Ignoring people who regret using your product is not only bad ethics, it's also bad for business." – Nir Eyal, Author [24]

Products that promote healthy habits - what the Manipulation Matrix refers to as "Facilitators" - are more sustainable than those relying on constant novelty and aggressive user acquisition [23]. Designing Digital Sanctuaries that respect attention as a finite resource and provide clear exit points builds trust. This trust translates into reduced churn and higher customer lifetime value [12].

Shifting from engagement metrics to value indicators responds to a real problem: human attention is too often treated as a disposable asset in the race for sales and ad revenue [12]. By offering focused, quieter alternatives to the often chaotic digital landscape, you’re not sacrificing growth. Instead, you’re investing in meaningful, long-term relationships that outlast any short-term gains.

Conclusion

The balance between keeping users engaged and maintaining ethical standards will shape the trajectory of your product. One widely cited figure holds that 88% of online users are less likely to return after a single bad experience [26]. Combine that with increasing regulatory scrutiny, and the cost of manipulative practices is higher than ever. The path forward is clear: prioritize designs that genuinely support user well-being.

Choosing ethical design doesn’t hinder growth - it builds the foundation for it. Focusing on user satisfaction leads to sustainable success. By integrating strategies like stopping cues, value-driven metrics, and ethical audits, you can cultivate trust while driving long-term revenue. Aligning ethical practices with growth isn’t just a moral choice - it’s a smart business strategy for SaaS innovation.

"Design is power. In the past decade, software engineers have had to confront the fact that the power they hold comes with responsibilities to users and to society. In this decade, it is time for designers to learn this lesson as well." – Arvind Narayanan, Associate Professor of Computer Science [6]

This responsibility demands immediate action. Start small: evaluate one feature this week using the three-step ethical decision framework. Ask yourself: Would I use this feature personally? Would users regret it if they fully understood it? How might it impact vulnerable groups? These questions can steer you toward designs that serve people - not just metrics.

FAQs

::: faq

What are Digital Sanctuaries, and how are they different from traditional SaaS platforms?

Digital Sanctuaries are a new wave of SaaS tools designed with a focus on simplicity, calm, and intentional use. Unlike traditional platforms that bombard users with endless features and notifications to maximize engagement, these tools prioritize a distraction-free, peaceful experience. By keeping interfaces minimal and avoiding unnecessary complexity, Digital Sanctuaries create a space where users can work or interact without feeling overwhelmed.

Most conventional SaaS platforms are built to keep users hooked, relying on tactics like persuasive design and frequent feature updates. While this approach might boost engagement, it often leads to digital fatigue and a sense of tech overload. Digital Sanctuaries, on the other hand, take a different path. Tools like Onsara, Mochi, and Sutta 423 from Artisan Strategies focus on mindful interaction, placing user well-being above the relentless pursuit of growth. It's a refreshing shift in how we think about technology. :::

::: faq

How can SaaS companies prioritize user value over engagement metrics when measuring success?

To genuinely serve users, SaaS companies should focus on metrics that highlight meaningful outcomes and satisfaction, rather than just tracking clicks or time spent. For instance, measuring task completion rates, user-reported well-being, and Net Promoter Scores (NPS) can offer a more accurate picture of the product's impact. Running a "regret test", where users say whether they would still take an action if they fully understood how the feature was designed to influence them, can also help uncover and address manipulative design practices.

Other valuable metrics might include monitoring intentional usage patterns, such as time spent in focus modes, thoughtful acceptance of notifications, and a reduction in compulsive behaviors. By prioritizing metrics that support user autonomy and long-term trust, companies can underscore their dedication to ethical design while providing genuine benefits. For apps like Onsara, Mochi, and Sutta 423, success could be seen in how often users complete focus sessions, avoid regret-inducing interactions, and experience a sense of calm or comfort while engaging with the platform. :::

::: faq

How can small SaaS teams design products that are both engaging and ethical?

Small SaaS teams can make ethical design a core part of their development process by weaving it into every step. Begin with an ethical impact assessment to pinpoint potential risks, such as privacy violations, loss of user control, or unintended psychological effects. Once identified, tackle these risks with practical solutions like clear and straightforward consent processes, collecting only the data you truly need, and providing terms that are easy to understand.

Steer clear of manipulative design tactics by scrutinizing your interfaces for deceptive elements, like hidden fees or default settings that trick users. Instead, aim for honest, user-friendly designs. A good rule of thumb? Ask yourself if users might regret their choices because the design led them astray.

Incorporating diverse viewpoints into your design process and running regular usability tests can help uncover issues you might have missed. Handle user data with care, prioritizing privacy and building trust. By making these practices part of your routine, even a small team can craft SaaS products that are not only effective but also ethically sound. :::
